5 Practical Techniques to Detect and Mitigate LLM Hallucinations Beyond Prompt Engineering

My friend who is a developer once asked an LLM to generate documentation for a payment API.

What’s Happening

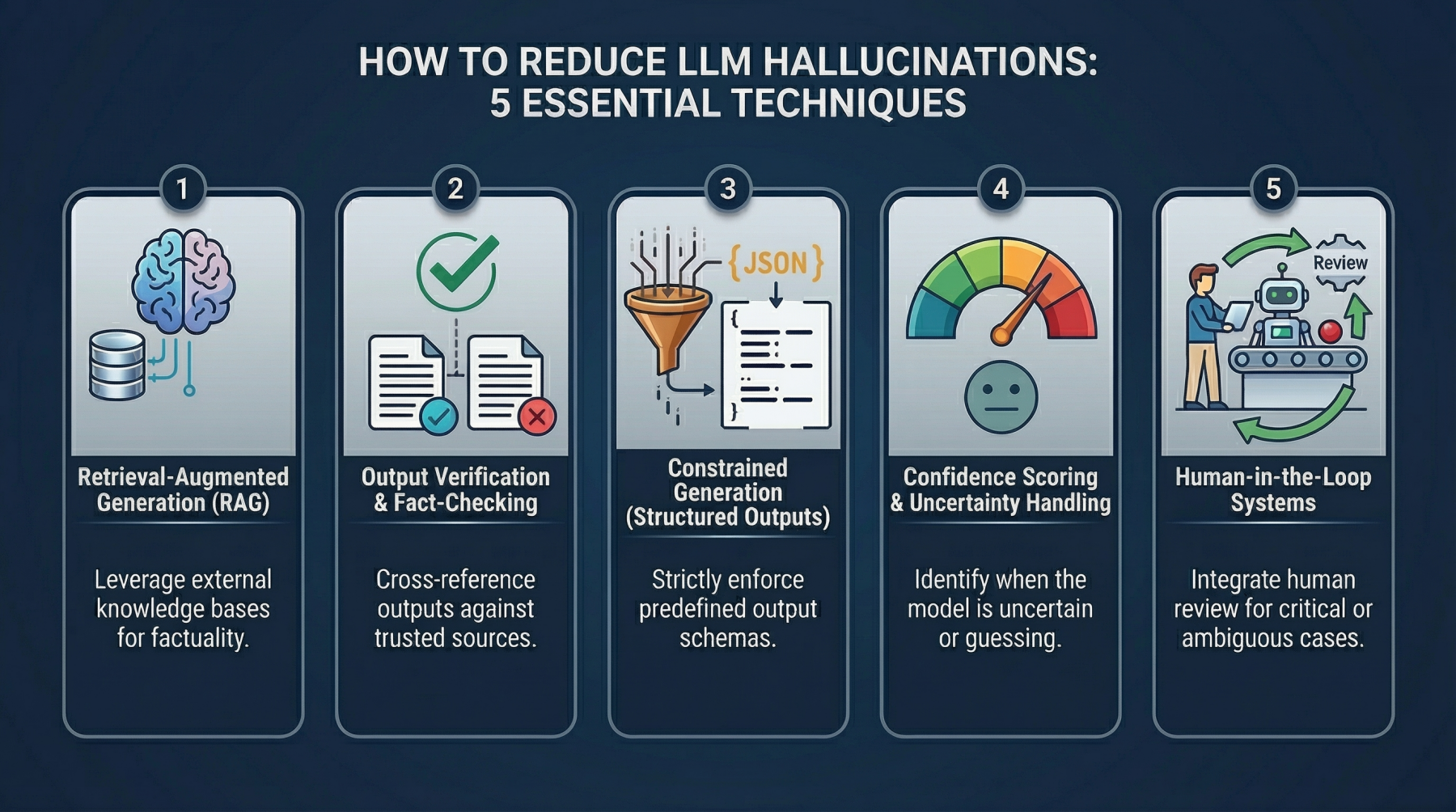

By Shittu Olumide, in Language Models

In this article, you will learn why large language model hallucinations happen and how to reduce them using system-level techniques that go beyond prompt engineering. Topics we will cover include:

- What causes hallucinations in large language models.

- Five practical techniques for detecting and mitigating hallucinated outputs in production systems.

- How to implement these techniques with simple examples and realistic design patterns.

The Details

When the LLM-generated documentation for my friend's payment API came back, it had a clean structure, the right tone, and even example endpoints.

The model had confidently invented endpoints, parameters, and responses that felt real enough to pass a quick review. It was only caught when someone tried to integrate it, and nothing worked.
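A failure like this can often be caught before integration by checking generated text against a trusted source. The sketch below is only an illustration under stated assumptions: the `KNOWN_ENDPOINTS` set, the endpoint paths, and the `find_unknown_endpoints` helper are all hypothetical, and in a real system the allowlist would be loaded from the actual API specification rather than hard-coded.

```python
import re

# Hypothetical allowlist; in practice this would be loaded from the real API spec.
KNOWN_ENDPOINTS = {
    ("POST", "/v1/payments"),
    ("GET", "/v1/payments/{id}"),
}

# Matches an HTTP method followed by a path, e.g. "GET /v1/payments/{id}".
ENDPOINT_PATTERN = re.compile(r"\b(GET|POST|PUT|PATCH|DELETE)\s+(/\S+)")


def find_unknown_endpoints(generated_docs, known=KNOWN_ENDPOINTS):
    """Return sorted (method, path) pairs found in the text but absent from the known set."""
    mentioned = {
        (method, path.rstrip(".,;:)"))  # drop trailing sentence punctuation
        for method, path in ENDPOINT_PATTERN.findall(generated_docs)
    }
    return sorted(mentioned - set(known))


# Made-up model output: the third endpoint is not in the allowlist.
generated = """
POST /v1/payments creates a payment.
GET /v1/payments/{id} fetches one payment.
POST /v1/payments/{id}/instant-refund refunds instantly.
"""

unknown = find_unknown_endpoints(generated)
print(unknown)
```

Any pair returned by this check is a candidate for human review before the documentation is published. The check only covers endpoint names; invented parameters or response fields would need a similar comparison against the real schema.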

Why This Matters

That is what hallucination looks like in practice. The model makes things up and presents them as facts, without any signal that something is wrong. It shows up in subtle ways across production systems.

Key Takeaways

- Fake citations in research tools.

- Nonexistent product features in customer support responses.

In isolation, these might seem like small errors. At scale, they become serious problems.

The Bottom Line

Prompt engineering helps, but only up to a point. Prompts can guide the model, but they do not fundamentally change how it generates answers.
