A New Google AI Research Proposes Deep-Thinking Ratio to ...


What’s Happening

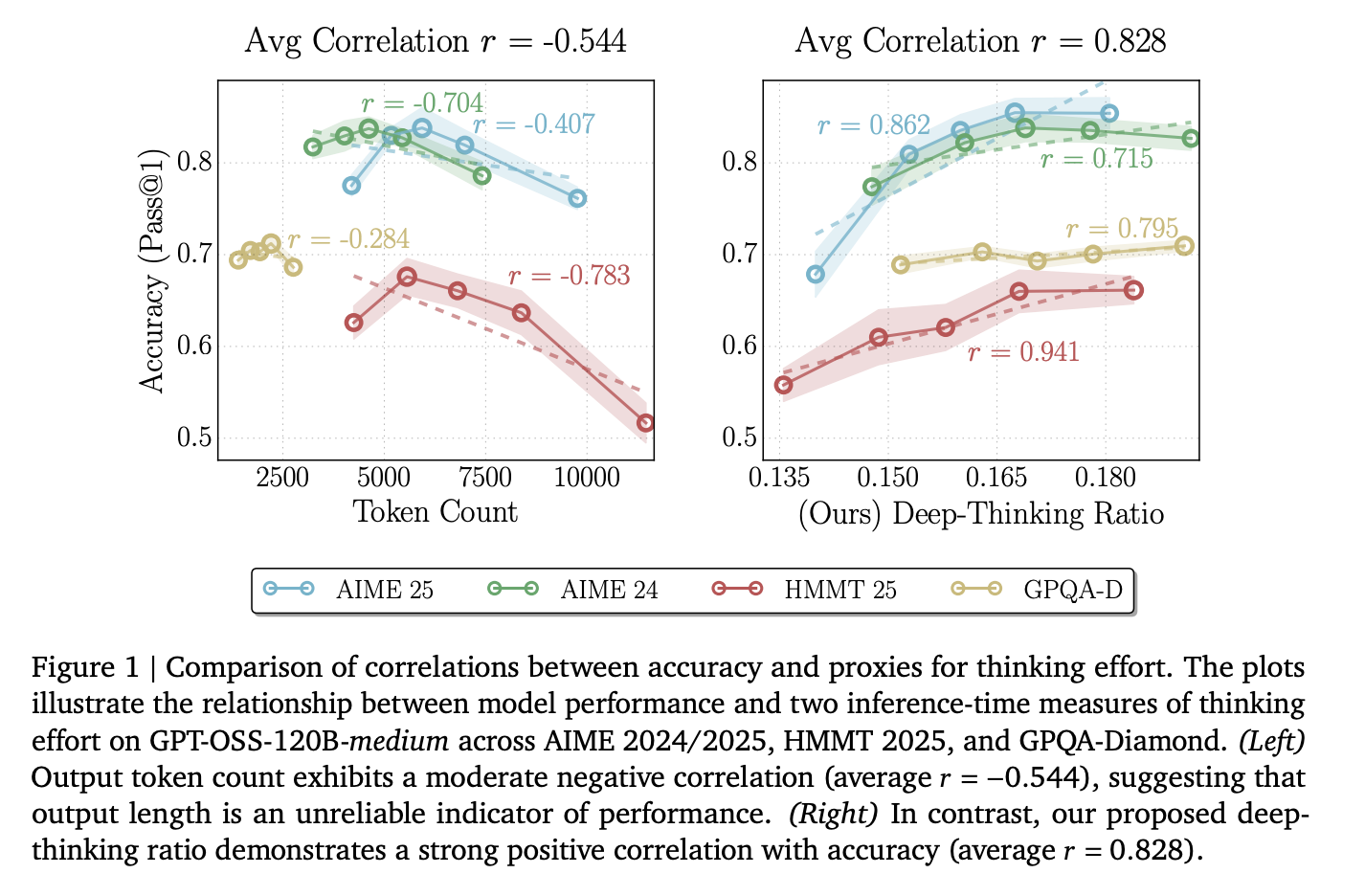

So get this: For the last few years, the AI world has followed a simple rule: if you want a Large Language Model (LLM) to solve a harder problem, make its Chain-of-Thought (CoT) longer.

But new research from the University of Virginia and Google argues that thinking long is not the same as thinking hard. (Shocking, we know.)

The research team proposes Deep-Thinking Ratio to improve LLM accuracy while cutting total inference costs by half.

Why This Matters

If a longer Chain-of-Thought isn't automatically a better one, then piling on more tokens stops being the default way to buy accuracy, and inference cost becomes something teams can actually cut.

The Bottom Line

This story is still developing, and we’ll keep you updated as more info drops.

Is this a W or an L? You decide.
