Everything You Need to Know About Recursive Language Models

If you are here, you have probably heard about recent work on recursive language models.

What’s Happening

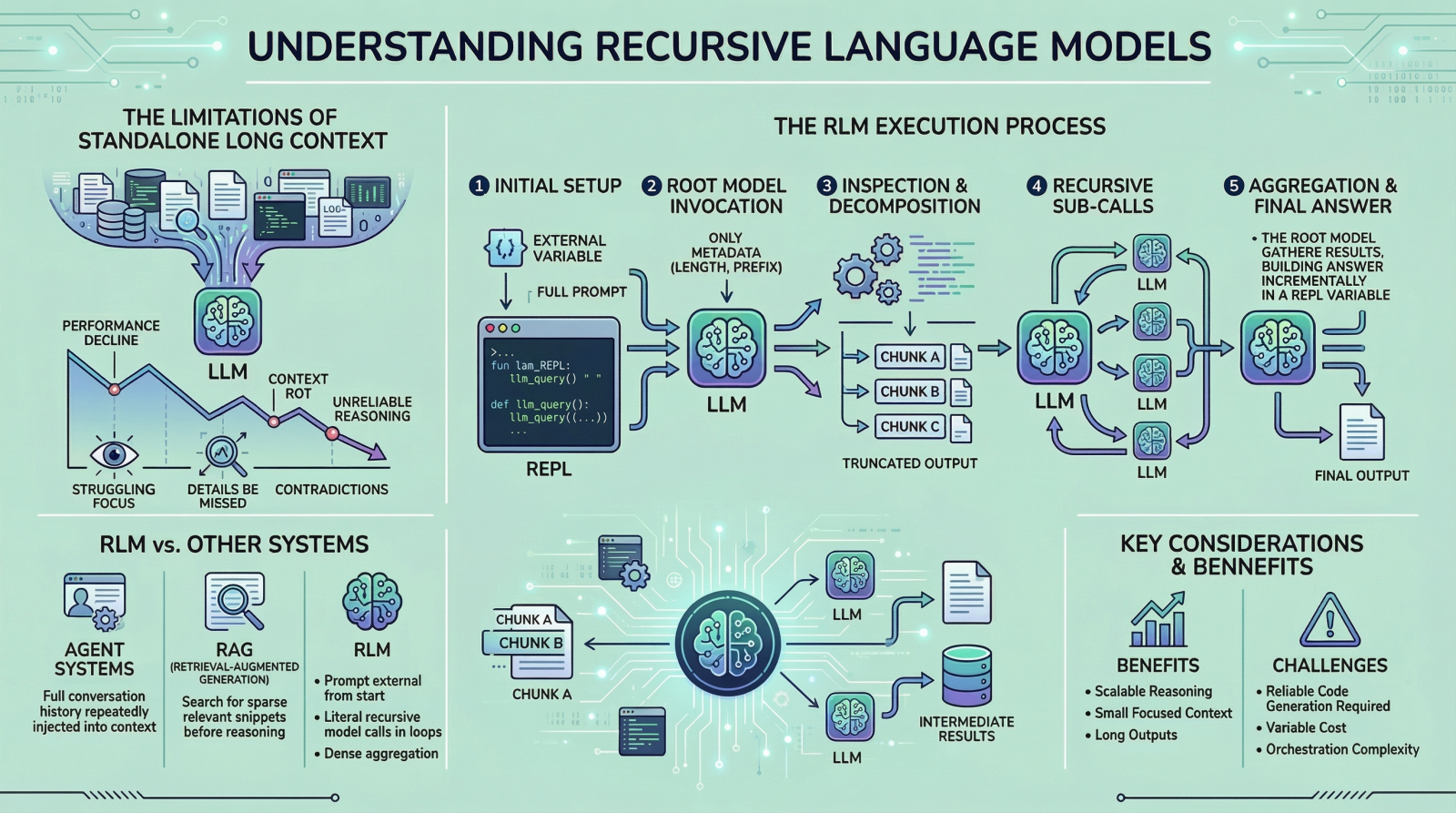

By Bala Priya C in Language Models

In this article, you will learn what recursive language models are, why they matter for long-input reasoning, and how they differ from standard long-context prompting, retrieval, and agentic systems.

Topics we will cover include:

- Why long context alone does not solve reasoning over large inputs
- How recursive language models use an external runtime and recursive sub-calls to process information
- The main tradeoffs, limitations, and practical use cases of this approach

Let's get right to it.

The idea has been trending across LinkedIn and X, which led me to study the topic more deeply and share what I learned with you.

The Details

I think we can all agree that large language models (LLMs) have improved rapidly over the past few years, especially in their ability to handle large inputs. This progress has led many people to assume that long context is largely a solved problem, but it is not.

If you have tried giving models long inputs close to, or equal to, their context window, you might have noticed that they become less reliable. They often miss details present in the provided information, contradict earlier statements, or produce shallow answers instead of doing careful reasoning.

Why This Matters

This issue is often referred to as “context rot,” which is quite an interesting name. Recursive language models (RLMs) are a response to this problem. Instead of pushing more and more text into a single forward pass of a language model, RLMs change how the model interacts with long inputs in the first place.
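To make the contrast with a single forward pass concrete, here is a minimal sketch of the general idea of recursive sub-calls. It is a toy illustration under stated assumptions, not the method from any particular RLM work: `recursive_answer`, `stub_model`, and `max_lines` are hypothetical names, and `stub_model` is a deterministic stand-in for what would be a real model call.

```python
from typing import Callable, List


def recursive_answer(
    lines: List[str],
    sub_call: Callable[[List[str]], int],
    max_lines: int = 4,
) -> int:
    """Answer a counting question over `lines` without ever handing
    more than `max_lines` lines to a single sub-call."""
    # Base case: the piece is small enough for one sub-call.
    if len(lines) <= max_lines:
        return sub_call(lines)
    # Recursive case: split the input and combine the partial answers.
    mid = len(lines) // 2
    left = recursive_answer(lines[:mid], sub_call, max_lines)
    right = recursive_answer(lines[mid:], sub_call, max_lines)
    return left + right


def stub_model(chunk: List[str]) -> int:
    """Hypothetical stand-in for a model call (assumption, not a real API):
    counts the lines in the chunk that mention 'error'."""
    return sum("error" in line.lower() for line in chunk)


# The long input lives in the program as an ordinary variable,
# not inside a single prompt.
document = [f"line {i}: ok" for i in range(20)]
document[3] = "line 3: ERROR disk full"
document[17] = "line 17: error timeout"

total = recursive_answer(document, stub_model)
print(total)
```

The point of the sketch is only the shape of the computation: each sub-call sees a small slice of the input, and the surrounding program, rather than one oversized prompt, is responsible for combining the results.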

The Bottom Line

In this article, we will look at what RLMs are, how they work, and the kinds of problems they are designed to solve.
