I don’t pay for ChatGPT, Perplexity, Gemini, or Claude – I stick to my self-hosted LLMs instead

There’s no point in relying on AI tools when my local LLMs can handle everything

By Ayush Pande · Published Feb 27, 2026, 1:00 PM EST

Ayush Pande is a PC hardware and gaming writer. When he’s not working on a new article, you can find him with his head stuck inside a PC or tinkering with a server operating system. Besides computing, his interests include spending hours in long RPGs, yelling at his friends in co-op games, and practicing guitar.

What’s Happening

Although AI tools are a godsend for tedious tasks, I must admit that I’m not the biggest fan of cloud-based LLM providers.

The Details

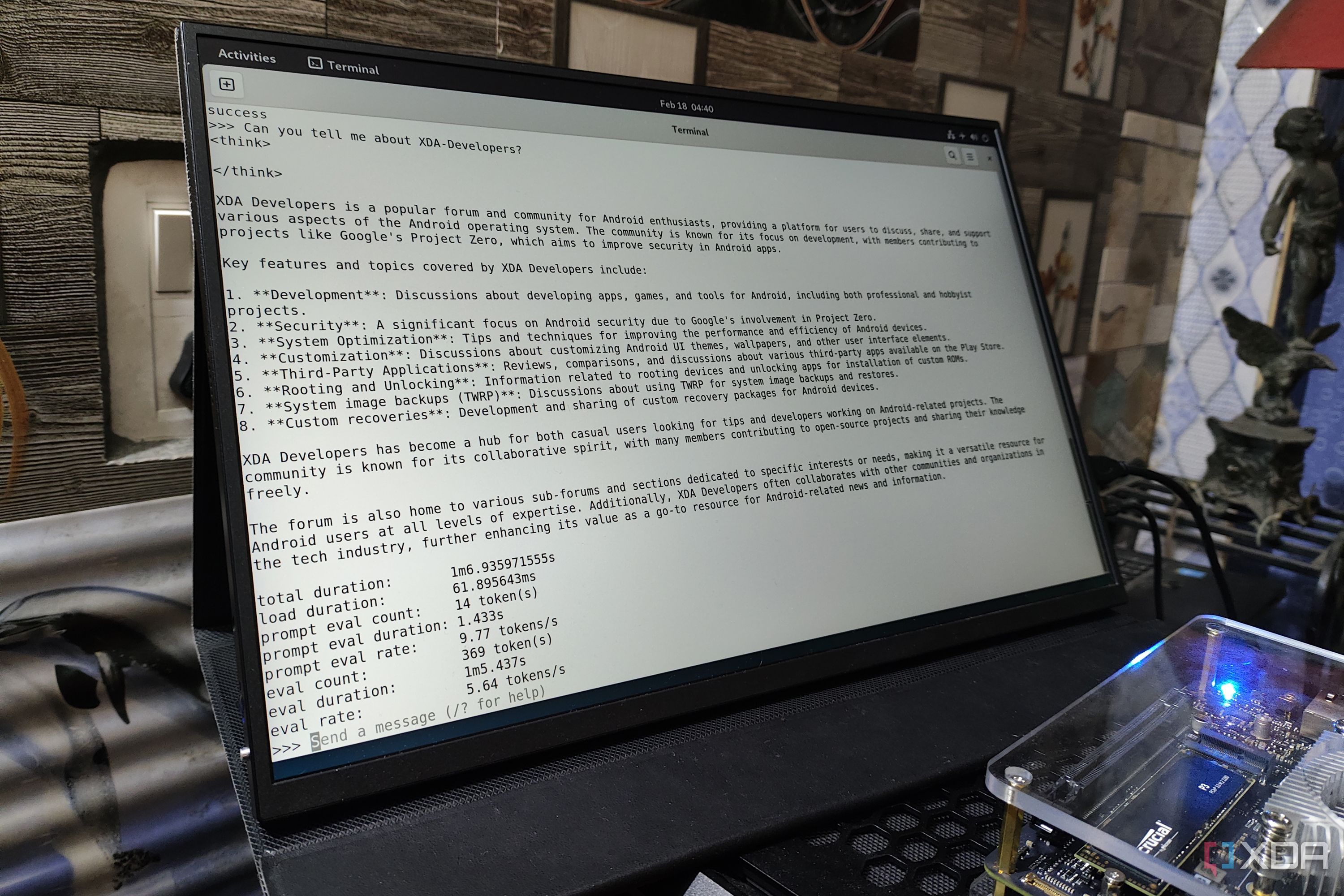

Sure, their automation and productivity features are pretty handy, but between their lack of privacy and premium API plans, the prospect of relying on cloud platforms never sat well with me. Fortunately, I came across Ollama around the same time I started looking into FOSS self-hosted apps. After experimenting with a multitude of LLMs across different graphics cards, I’ve found my local language models significantly more useful than their cloud counterparts, to the point where I don’t need to spend money on ChatGPT, Perplexity, Gemini, Claude, or any other AI provider.
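For readers curious what talking to a self-hosted model looks like in practice, here is a minimal sketch. It is not the author's actual setup. It assumes Ollama is running locally on its default port (11434) and serving its `/api/generate` endpoint. The helper names `build_generate_request` and `ask_local_llm`, and the `llama3.2` model tag mentioned afterwards, are illustrative placeholders.

```python
import json
import urllib.request

# Assumption: a local Ollama server listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(
    model: str, prompt: str, url: str = OLLAMA_URL
) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_llm(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False the server replies with a single JSON object
    # whose "response" field holds the full completion.
    return body["response"]
```

Calling `ask_local_llm("llama3.2", "Summarize this changelog: ...")` would return the model's reply as a string, provided that model has already been pulled. Nothing leaves the machine, since the request only ever goes to `localhost`.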

I’d rather not share private info with cloud platforms

And I get to save money, too

When I talk about AI tools, I don’t mean simple conversational models. What I really need are the reasoning capabilities of LLMs, as they can tackle a variety of annoying tasks that would take hours of monotonous work.

