New SemiAnalysis InferenceX Data Shows NVIDIA Blackwell U...

What’s Happening

Breaking it down: The NVIDIA Blackwell platform has been widely adopted by leading inference providers such as Baseten, DeepInfra, Fireworks AI and Together AI to reduce cost per token by up to 10x.

Now, the NVIDIA Blackwell Ultra platform is taking this momentum further for agentic AI. AI agents and coding assistants are driving explosive growth in software-programming-related AI queries.

Cloud providers including Microsoft, CoreWeave and Oracle Cloud Infrastructure are deploying NVIDIA GB300 NVL72 systems at scale for low-latency and long-context use cases such as agentic coding and coding assistants.

By Ashraf Eassa

The Details

These applications require low latency to maintain real-time responsiveness across multistep workflows and long context when reasoning across entire codebases. New SemiAnalysis InferenceX performance data shows that the combination of NVIDIA’s software optimizations and the next-generation NVIDIA Blackwell Ultra platform has delivered breakthrough advances on both fronts.

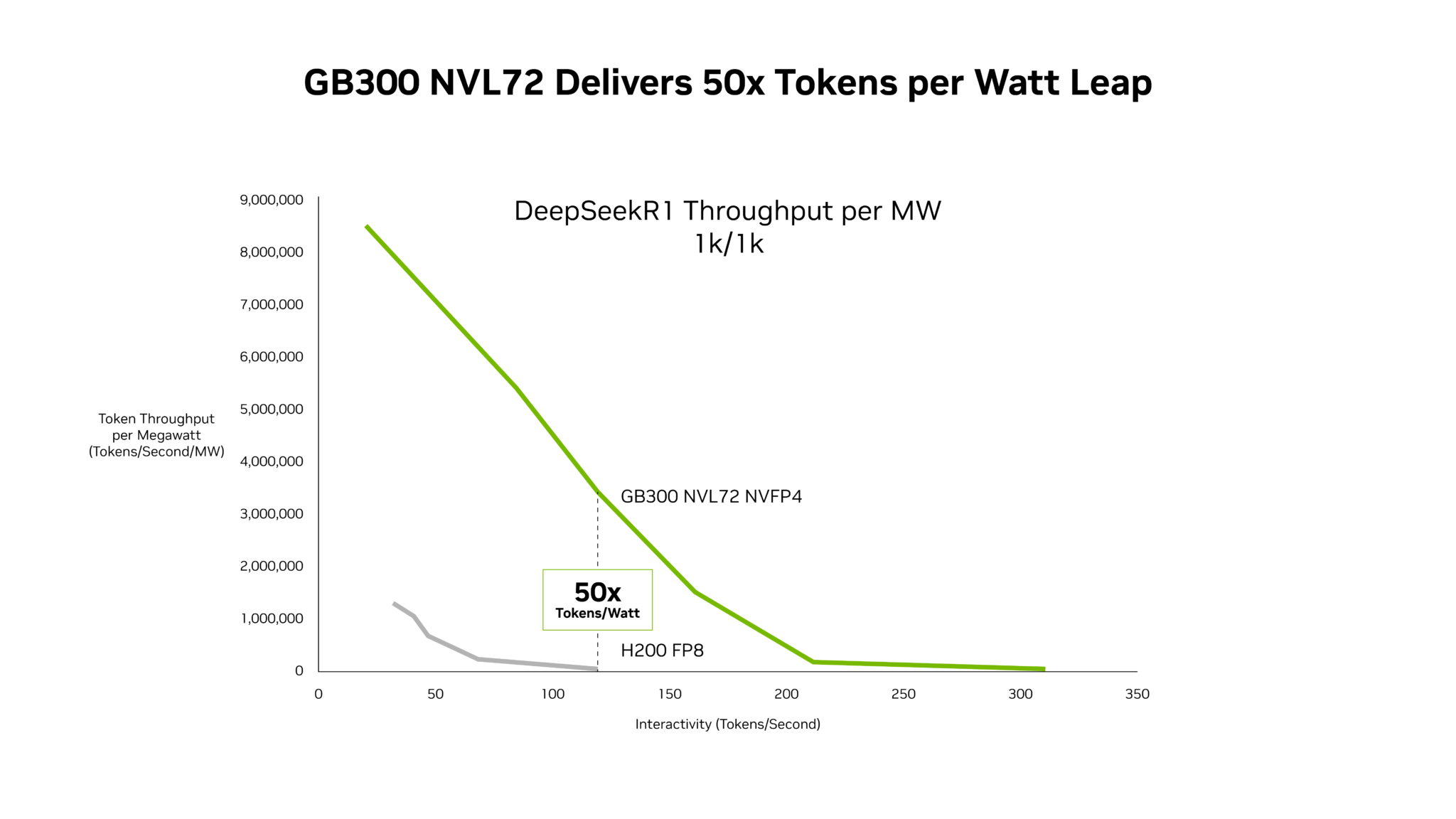

NVIDIA GB300 NVL72 systems now deliver up to 50x higher throughput per megawatt, resulting in 35x lower cost per token compared with the NVIDIA Hopper platform. Across chips, system architecture and software, NVIDIA’s extreme codesign accelerates performance across AI workloads, from agentic coding to interactive coding assistants, while driving down costs at scale.

Why This Matters

GB300 NVL72 Delivers up to 50x Better Performance for Low-Latency Workloads

Recent analysis from Signal65 shows that NVIDIA GB200 NVL72 with extreme hardware and software codesign delivers more than 10x more tokens per watt, resulting in one-tenth the cost per token compared with the NVIDIA Hopper platform. These massive performance gains continue to expand as the underlying stack improves.
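The headline figures pair a throughput-per-power gain with a cost-per-token reduction. The two are linked by simple arithmetic, and a rough sketch can show how. It assumes cost per token equals the total cost of running a megawatt of capacity divided by the tokens that megawatt produces, and that each pair of "up to" figures refers to the same operating point; neither assumption is confirmed by the article. The function name `implied_cost_ratio` is hypothetical and used only for illustration.

```python
def implied_cost_ratio(throughput_gain: float, cost_per_token_reduction: float) -> float:
    """Return the implied (new / old) ratio of total cost per megawatt.

    Assumed model (not from the article):
        cost_per_token = cost_per_megawatt / tokens_per_megawatt
    so  cost_per_token_reduction = throughput_gain / cost_ratio
    and cost_ratio = throughput_gain / cost_per_token_reduction.
    """
    if throughput_gain <= 0 or cost_per_token_reduction <= 0:
        raise ValueError("both factors must be positive")
    return throughput_gain / cost_per_token_reduction


# Figures quoted in the article, both relative to the NVIDIA Hopper platform.
gb300_ratio = implied_cost_ratio(throughput_gain=50, cost_per_token_reduction=35)
gb200_ratio = implied_cost_ratio(throughput_gain=10, cost_per_token_reduction=10)

print(f"GB300 NVL72 implied cost per megawatt vs. Hopper: {gb300_ratio:.2f}x")
print(f"GB200 NVL72 implied cost per megawatt vs. Hopper: {gb200_ratio:.2f}x")
```

Under these assumptions, a 50x throughput gain paired with a 35x cost reduction implies that a megawatt of GB300 NVL72 capacity costs roughly 1.4x as much as a megawatt of Hopper capacity, while the GB200 NVL72 figures imply roughly equal cost per unit of power. These are back-of-the-envelope readings of the quoted numbers, not published pricing.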

This adds to the ongoing AI race that’s captivating the tech world.
